Compatibility vs Compatibility: Understanding the Two Senses of the Term

Explore the difference between general compatibility and domain-specific compatibility. This analytical guide explains definitions, contexts, and decision factors to help you choose wisely across relationships, devices, software, and everyday life.

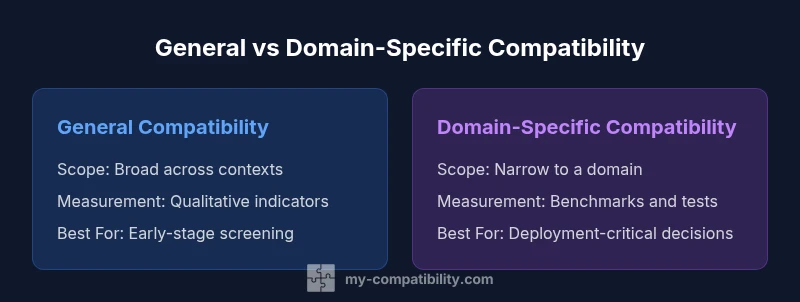

TL;DR: The difference between the two senses of compatibility comes down to scope. General compatibility describes broad suitability across contexts, while domain-specific compatibility targets precise alignment within a field, such as software versions or zodiac-based pairings. According to the My Compatibility analysis, the right form depends on context and risk tolerance, so start broad when planning and narrow only where precision matters.

Defining the Terms: compatibility vs compatibility

Two uses of the word 'compatibility' shape how teams design, test, and communicate plans. According to My Compatibility, clear terminology reduces confusion when the word is used across domains. In everyday language, most people mean a broad sense of fit: will this person, device, or system work well with others in general? In formal settings, however, the term splits into two operational senses. General compatibility asks whether a product, idea, or relationship fits a wide range of contexts, while domain-specific compatibility asks whether something aligns precisely with a defined set of rules, standards, or conditions. Recognizing this distinction is the first step toward better planning and clearer communication. By keeping the two senses distinct, teams avoid overgeneralizing claims about safety, performance, or interchangeability. The rest of this article unpacks how the two forms differ, when each matters, and how to document criteria so stakeholders can act with confidence.

The Evolution of the Idea: from everyday usage to formal definitions

Historically, the word compatibility emerged from practical concerns about whether components, people, or ideas could exist together without conflict. Over time, engineers formalized the concept to mean interoperability under a defined framework, while social scientists used it to describe relationship potential across cultural or personal factors. This evolution created a useful dichotomy: general compatibility acts as a preliminary screen, and domain-specific compatibility becomes the gatekeeper for critical outcomes. In modern practice, teams must decide when a broad fit suffices and when precise alignment is required to avoid costly mismatches. The My Compatibility framework emphasizes documenting both the broad objectives and the exact criteria used for domain-specific checks, so decisions remain transparent even as circumstances shift.

Context Matters: relationships, technology, and data

Context shapes what counts as compatible. In personal relationships, compatibility might cover values, communication styles, and lifestyle alignment, which are inherently subjective and dynamic. In technology, compatibility depends on interfaces, APIs, versioning, and performance benchmarks that can be tested and measured. In data exchange, compatibility involves schema alignment, encoding, and metadata consistency to ensure seamless interoperability. When context changes—such as adopting a new platform or updating a regulatory requirement—the tolerance for deviation from the defined criteria shifts. The general form of compatibility is more forgiving, while the domain-specific form tightens controls to protect critical outcomes like safety, reliability, and compliance. Across domains, teams should explicitly note how context influences both the interpretation and the acceptable degree of misalignment.
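To make the data-exchange case concrete, here is a minimal sketch of a schema alignment check. The `Field` type, the `schema_mismatches` function, and the example field names are all hypothetical and chosen for illustration; real schema systems also deal with encodings, nested types, and metadata, which this sketch ignores.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Field:
    """One field in a (hypothetical) record schema."""
    name: str
    type_name: str
    required: bool = True


def schema_mismatches(producer: list[Field], consumer: list[Field]) -> list[str]:
    """List the reasons the producer's records would not satisfy the consumer."""
    produced = {f.name: f for f in producer}
    problems = []
    for wanted in consumer:
        got = produced.get(wanted.name)
        if got is None:
            # A missing optional field is tolerated; a missing required one is not.
            if wanted.required:
                problems.append(f"missing required field '{wanted.name}'")
            continue
        if got.type_name != wanted.type_name:
            problems.append(
                f"field '{wanted.name}' is {got.type_name}, expected {wanted.type_name}"
            )
    return problems


producer = [Field("id", "int"), Field("email", "str"), Field("created", "str")]
consumer = [
    Field("id", "int"),
    Field("email", "str"),
    Field("created", "datetime"),
    Field("nickname", "str", required=False),
]

print(schema_mismatches(producer, consumer))
# ["field 'created' is str, expected datetime"]
```

An empty list means the two schemas align under these simplified rules. The check is deliberately strict on types and lenient on optional fields, which mirrors how the domain-specific form tightens controls only where the criteria say it must.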

The Key Distinctions: scope, precision, and outcomes

The two senses of compatibility differ along several axes. First, scope: general compatibility casts a wide net across contexts, whereas domain-specific compatibility limits itself to a narrow domain. Second, precision: general assessments are qualitative, focusing on overall fit; domain-specific assessments rely on quantitative benchmarks, standards, and test results. Third, outcomes: broad compatibility is useful for early-stage planning and risk screening, while precise compatibility is essential for deployment, certification, or safety-critical scenarios. The practical takeaway is to map distinctions explicitly in project documents: define the general criteria first, then layer in the specific criteria that apply to the domain where failures would be costly. This layered approach reduces rework and aligns expectations among stakeholders.
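The layered approach described above can be sketched in a few lines of Python. Everything here is an illustrative assumption: the `Check` type, the `layered_assessment` function, the candidate's keys, and the thresholds (API major version 2, 150 ms latency) are invented for the example and are not part of any published framework.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Check:
    """A named criterion with a predicate over a candidate's attributes."""
    name: str
    passed: Callable[[dict], bool]


def layered_assessment(candidate: dict, general: list[Check], specific: list[Check]) -> dict:
    """Run the general screen first; run domain-specific checks only if it passes."""
    general_failures = [c.name for c in general if not c.passed(candidate)]
    if general_failures:
        return {"stage": "general", "ok": False, "failures": general_failures}
    specific_failures = [c.name for c in specific if not c.passed(candidate)]
    return {"stage": "domain-specific", "ok": not specific_failures, "failures": specific_failures}


# Hypothetical candidate: a library being considered for a project.
candidate = {
    "license_ok": True,
    "supports_team_language": True,
    "api_major": 2,
    "p95_latency_ms": 180,
}

general = [
    Check("license acceptable", lambda c: c["license_ok"]),
    Check("fits team skills", lambda c: c["supports_team_language"]),
]
specific = [
    Check("API major version is 2", lambda c: c["api_major"] == 2),
    Check("p95 latency <= 150 ms", lambda c: c["p95_latency_ms"] <= 150),
]

print(layered_assessment(candidate, general, specific))
# {'stage': 'domain-specific', 'ok': False, 'failures': ['p95 latency <= 150 ms']}
```

The candidate clears the broad screen but fails one measurable threshold. Reporting the stage alongside the failures keeps the two senses of compatibility from being merged into a single pass/fail result.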

How to Decide Which Form You Need: a practical checklist

Use the following framework to decide which form of compatibility fits your objective:

- Define the decision context: Is this a quick viability check or a deployment-critical choice?

- Assess risk: What are the consequences if incompatibility occurs?

- Identify stakeholders: Who must approve the criteria and interpret results?

- Establish a testing plan: What tests or benchmarks will demonstrate compatibility, and at what thresholds?

- Document criteria: Separate general principles from domain-specific requirements and link them to measurable outcomes.

- Review and iterate: Conditions change; schedule periodic re-evaluations to keep criteria up to date.
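One way to keep the checklist above from living only in people's heads is to record it as a small data structure. The sketch below is an assumption about how that might look: the `CompatibilityPlan` class, its field names, the 90-day review interval, and the rule in `required_form` are all hypothetical and should be adapted to your own process.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class CompatibilityPlan:
    """A record of the checklist answers for one decision (hypothetical structure)."""
    context: str                      # "viability" or "deployment"
    failure_cost: str                 # "low", "medium", or "high"
    approvers: list[str]              # stakeholders who sign off on the criteria
    general_principles: list[str] = field(default_factory=list)
    # Domain-specific requirements, each mapped to a measurable threshold.
    domain_requirements: dict[str, str] = field(default_factory=dict)
    last_reviewed: date = field(default_factory=date.today)
    review_interval_days: int = 90    # assumed default, not a recommendation

    def required_form(self) -> str:
        """Deployment-critical or high-cost decisions call for domain-specific checks."""
        if self.context == "deployment" or self.failure_cost == "high":
            return "domain-specific"
        return "general"

    def review_due(self, today: date) -> bool:
        """True once the review interval has elapsed since the last review."""
        return today >= self.last_reviewed + timedelta(days=self.review_interval_days)


plan = CompatibilityPlan(
    context="deployment",
    failure_cost="medium",
    approvers=["platform lead"],
    general_principles=["works across supported platforms"],
    domain_requirements={"API version": ">= 2.0, < 3.0"},
    last_reviewed=date(2024, 1, 1),
)

print(plan.required_form())                # domain-specific
print(plan.review_due(date(2024, 3, 1)))   # False
print(plan.review_due(date(2024, 4, 1)))   # True
```

Keeping `general_principles` and `domain_requirements` as separate fields follows the "document criteria" step, and `review_due` gives the "review and iterate" step a concrete trigger.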

Real-World Scenarios: Cases Across Domains

- Case A: Relationship planning in dating and friendships often starts with broad compatibility considerations such as shared interests, values, and communication comfort. As relationships progress, domain-specific checks (like long-term goals or cultural fit) become relevant to durability.

- Case B: Software development uses general compatibility to vet platform-agnostic features, then moves to domain-specific checks when integrating with a particular API or version.

- Case C: Consumer electronics often require domain-specific checks to confirm that a new component, such as a motherboard or GPU, works with the existing components and firmware.

In each scenario, teams should separate initial screening from rigorous domain-specific validation to avoid conflating the two.

Measuring and Communicating Compatibility: metrics and language

Measuring general compatibility relies on qualitative indicators such as alignment of goals or user experience expectations. Domain-specific compatibility uses quantitative tests: version ranges, performance benchmarks, error rates, and conformance with standards. Communicating results should use a shared vocabulary that distinguishes general fit from domain-specific validation. Visual aids like simple charts can summarize the scope and the results of each assessment, helping non-technical stakeholders understand where adjustments are needed. Remember that even precise domain-specific results require interpretation within the broader context; a green signal on a domain test does not automatically guarantee success in every real-world scenario.
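As a rough illustration of the quantitative side, the sketch below checks a version against a supported range and an observed error rate against a threshold. The function names, the default range (2.0.0 up to but not including 3.0.0), and the 1% error budget are assumptions made for the example. The version parser handles only plain dotted integers; pre-release tags such as `2.0.0-rc1` would need a proper versioning library.

```python
def parse_version(text: str) -> tuple[int, ...]:
    """Turn '2.4.1' into (2, 4, 1). Handles dotted integers only."""
    return tuple(int(part) for part in text.split("."))


def in_range(version: str, minimum: str, below: str) -> bool:
    """True if minimum <= version < below, compared component by component."""
    return parse_version(minimum) <= parse_version(version) < parse_version(below)


def error_rate(failures: int, total: int) -> float:
    """Fraction of failed runs out of all runs."""
    if total <= 0:
        raise ValueError("total must be positive")
    return failures / total


def domain_report(
    version: str,
    failures: int,
    total: int,
    *,
    minimum: str = "2.0.0",
    below: str = "3.0.0",
    max_error_rate: float = 0.01,
) -> dict:
    """Summarize the domain-specific checks as separate, named results."""
    rate = error_rate(failures, total)
    return {
        "version_ok": in_range(version, minimum, below),
        "error_rate": rate,
        "error_rate_ok": rate <= max_error_rate,
    }


print(domain_report("2.4.1", failures=3, total=1000))
# {'version_ok': True, 'error_rate': 0.003, 'error_rate_ok': True}
```

The report keeps each metric as its own entry instead of collapsing them into one score, so a reader can see which criterion passed and which did not. Even when every entry is `True`, the result still needs interpreting against the broader context.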

Common Pitfalls and Edge Cases: what to avoid

- Blurring general and domain-specific criteria into one metric.

- Assuming a good broad fit guarantees domain-specific success.

- Failing to update criteria when contexts change, such as software updates or new regulatory rules.

- Overlooking edge cases that only appear under specific conditions or configurations.

- Relying on a single metric; a balanced scorecard often reveals hidden risks.

- Neglecting stakeholder alignment, which can undermine trust even when criteria appear sound.

- Treating terminology as cosmetic rather than foundational to decision quality.

The My Compatibility Perspective: a practical stance on terminology and decision-making

According to My Compatibility, the most effective approach is to create a shared framework that clearly separates general compatibility from domain-specific criteria. This clarity reduces miscommunication and helps teams allocate resources more efficiently. The framework should include explicit definitions, example scenarios, and a maintained log of criteria changes so lessons learned persist beyond a single project. My Compatibility emphasizes ongoing education for teams and a habit of documenting context changes that alter what counts as compatible. By maintaining rigorous but adaptable standards, organizations can move from vague assurances of fit to measurable confidence in interoperability across domains.

Authority and Further Reading: grounding your understanding with trusted sources

For readers seeking foundational definitions and broad perspectives on compatibility, consult reputable sources such as Britannica's overview of compatibility and Merriam-Webster's dictionary entry. Their definitions show how everyday language translates into formal criteria, and they can complement the My Compatibility framework as you tailor terminology to your domain and audience.

Comparison

| Feature | General compatibility | Domain-specific compatibility |
|---|---|---|
| Scope | Broad, cross-domain relevance | Narrow, domain-specific |
| Assessment style | Qualitative assessment, less formal | Quantitative benchmarks and formal tests |
| Measurement approach | Descriptive indicators, user feedback | Benchmarks, version checks, conformance tests |
| Best for | Early-stage planning, risk screening | Critical deployment, compliance, safety |
| Typical examples | Cross-platform usability, general fit | API versioning, hardware compatibility, zodiac compatibility (contextual) |
| Risks of misalignment | Missed opportunities for broader integration | Undetected incompatibilities that cause failures in specific domains |

Pros

- Clarifies scope to improve decision quality

- Reduces miscommunication by documenting criteria

- Supports risk assessment with structured criteria

- Enables phased validation from broad to precise

Cons

- Can introduce initial complexity when educating teams

- Requires ongoing maintenance as contexts evolve

- Edge cases may defy neat categorization

- May tempt over-engineering if misapplied

General compatibility is the broad first-pass, while domain-specific compatibility locks in precise success criteria

Use general compatibility to screen ideas and plans, then apply domain-specific checks where failures would be costly. This layered approach minimizes risk and clarifies expectations for all stakeholders.

Questions & Answers

What is the difference between general compatibility and domain-specific compatibility?

General compatibility describes broad fit across contexts, while domain-specific compatibility targets precise alignment within a defined domain. Understanding this distinction helps teams decide how deeply to test and what criteria to use.

General compatibility is a wide fit; domain-specific compatibility is a precise fit. Decide based on risk and context.

How do you decide which form to prioritize in a project?

Assess the decision context, risk of failure, and potential costs. If failures would be costly or dangerous, prioritize domain-specific checks; otherwise, start with general alignment and iterate.

Ask about risk and cost, and prioritize domain-specific checks if failures would be serious.

Can compatibility be measured quantitatively?

Yes. Use metrics like test coverage, version compatibility, error rates, and conformance to standards to quantify domain-specific compatibility; general compatibility relies more on qualitative indicators.

Yes, with tests, benchmarks, and standards conformance.

What are common pitfalls when mixing general and domain-specific compatibility?

Confusing broad fit with exact fit, ignoring edge cases, and neglecting updates as contexts evolve.

Watch out for assuming a general fit means a precise fit.

Why is terminology important for cross-team communication?

Clear terms reduce misunderstandings and misaligned expectations, improving planning and risk management.

Clear terminology saves time and prevents costly mistakes.

What are practical examples of domain-specific compatibility?

Software version compatibility, hardware component compatibility, and context-specific relationship criteria.

Think of exact version checks and hardware compatibility tests.

Highlights

- Define compatibility clearly before acting

- Use broad criteria for screening, precise criteria for validation

- Document changes to context and criteria over time

- Communicate results with a shared vocabulary across teams

- Monitor edge cases to avoid surprises in deployment