How to Match Compatibility: A Practical Guide for All

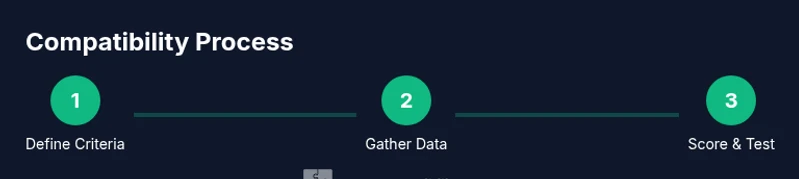

Learn how to match compatibility across zodiac signs, devices, and relationships with a practical, step-by-step approach. Define criteria, collect data, test quickly, and revise for durable alignment with real-world applicability.

Matching compatibility means evaluating how well two elements align across domains like zodiac signs, devices, software, and relationships. Start by defining core criteria, mapping needs to capabilities, and using a structured rating to judge fit. Then run small tests or scenarios to validate alignment before making a commitment, and revisit the assessment as needs evolve. This approach keeps decisions practical and adaptable.

Foundations of Compatibility Matching

Matching compatibility is a disciplined way to forecast how well two things will work together over time. It is not about chasing perfection, but about building reliable alignment that scales with changing circumstances. The My Compatibility team emphasizes a clear, repeatable process: set anchor criteria, gather evidence from multiple sources, and apply a transparent scoring model. By treating compatibility as a living attribute, you create a framework you can reuse across zodiac insights, tech ecosystems, and everyday life. In practice, you’ll move from gut instinct to data-informed judgment, reducing risk and increasing the odds of durable harmony.

- Define the top three criteria that matter most in the given domain (for example: goal alignment, data format compatibility, or sign-partner interplay).

- Collect signals from both objective measurements and subjective impressions to form a well-rounded view.

- Set a realistic time horizon and plan to revisit your assessment as needs evolve.

Defining Core Criteria Across Domains

A robust compatibility assessment begins with clearly articulated criteria that you can measure. Across zodiac, devices, and relationships, your criteria should capture both functional fit and experiential harmony. Start with three categories: core performance (does it work as intended?), reliability (how often does it break or drift from expectations?), and adaptability (can it adjust when circumstances change?). Translate each criterion into concrete indicators: numerical scores, observable behaviors, or verifiable data points. Document why each criterion matters and how you would quantify success. This disciplined framing helps prevent scope creep and bias, making the process fair and repeatable. Tip: use a simple scoring rubric (0-5) so different domains stay comparable.

- Prioritize criteria that affect long-term outcomes, not just initial impressions.

- Choose indicators that are easy to observe and verify over time.

- Align scores with your personal or organizational values to keep choices meaningful.
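The rubric described above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed format: the `Criterion` class, its field names, and the sample scores are assumptions made for the example. It records each criterion with its indicator and rationale, and rejects any score outside the 0-5 range.

```python
from dataclasses import dataclass

MIN_SCORE, MAX_SCORE = 0, 5  # the 0-5 rubric suggested in the text


@dataclass
class Criterion:
    """One rubric row (hypothetical structure for illustration)."""
    name: str        # e.g. "core performance"
    indicator: str   # the observable signal used to judge it
    rationale: str   # why this criterion matters
    score: int       # 0 (no fit) to 5 (excellent fit)

    def __post_init__(self) -> None:
        # Reject scores outside the rubric so every domain stays comparable.
        if not MIN_SCORE <= self.score <= MAX_SCORE:
            raise ValueError(
                f"{self.name}: score {self.score} is outside {MIN_SCORE}-{MAX_SCORE}"
            )


def average_score(rubric: list[Criterion]) -> float:
    """Unweighted mean of all criterion scores."""
    if not rubric:
        raise ValueError("rubric is empty")
    return sum(c.score for c in rubric) / len(rubric)


# Sample values are invented for the example.
rubric = [
    Criterion("core performance", "task completes as intended", "basic fit", 4),
    Criterion("reliability", "failures per month", "long-term trust", 3),
    Criterion("adaptability", "handles a changed requirement", "needs evolve", 5),
]
print(f"Average fit: {average_score(rubric):.1f} / {MAX_SCORE}")
```

Keeping the rationale next to each score makes it easier to revisit why a criterion was chosen when you reassess later.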

Assessing Zodiac Compatibility

Zodiac compatibility offers a lens into personality tendencies, communication styles, and values. While it should not be the sole determinant of compatibility, it can shape expectations and reduce surprises. To assess zodiac fit, pair sun sign traits with rising and moon profiles when available, and map these traits to your three core criteria. For example, if communication clarity is essential, compare typical communication styles and conflict resolution approaches. Keep astrologically derived insights as directional guidance, supplemented by behavior-based evidence. My Compatibility’s approach integrates tradition with empirical checks to avoid overreliance on astrology alone.

- Use zodiac traits to generate testable hypotheses (e.g., “we’re both decisive, so we should coordinate quickly”).

- Combine sign-based expectations with real-world performance data and conversations.

- Revisit zodiac considerations after a trial period to see if initial assumptions hold.

Assessing Device and Software Compatibility

Tech compatibility requires translating user needs into technical criteria. Focus on data formats, interoperability, security requirements, and user experience. Start by listing your must-haves (e.g., file format support, platform compatibility, API availability) and nice-to-haves (e.g., optional plugins, accessibility features). Then verify each item with vendor documentation, user reviews, or hands-on testing. Use standardized tests to simulate real workloads: data transfer, feature usage, and error handling. The result should be a scored comparison that highlights gaps and paths to remediation. Consistency across device types matters as much as raw capability.

- Create a compatibility matrix that maps needs to specifications.

- Confirm essential features work under realistic conditions before purchase or deployment.

- Plan for future upgrades by selecting forward-compatible options.
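A compatibility matrix of this kind can be approximated with plain dictionaries and sets. The sketch below is illustrative only: the feature names, the two candidate options, and the `find_gaps` helper are all hypothetical. It separates unmet must-haves (blockers) from unmet nice-to-haves so that gaps are visible before purchase or deployment.

```python
def find_gaps(needs: dict[str, bool], supported: set[str]) -> dict[str, list[str]]:
    """Split unmet needs into must-have blockers and nice-to-have gaps.

    `needs` maps a feature name to True (must-have) or False (nice-to-have).
    """
    blockers = [f for f, must in needs.items() if must and f not in supported]
    optional = [f for f, must in needs.items() if not must and f not in supported]
    return {"blockers": sorted(blockers), "optional_gaps": sorted(optional)}


# Hypothetical needs: True marks a must-have, False a nice-to-have.
needs = {
    "csv_export": True,
    "rest_api": True,
    "macos_support": True,
    "plugin_system": False,
    "screen_reader_support": False,
}

# Hypothetical candidates and the features each one claims to support.
candidates = {
    "Option A": {"csv_export", "rest_api", "macos_support"},
    "Option B": {"csv_export", "plugin_system", "screen_reader_support"},
}

for name, supported in candidates.items():
    gaps = find_gaps(needs, supported)
    verdict = "viable" if not gaps["blockers"] else "blocked"
    print(f"{name}: {verdict}, gaps={gaps}")
```

The supported-feature sets should come from vendor documentation or hands-on testing rather than marketing claims.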

Real-World Scenarios and Experiments

Theory only goes so far; practical testing reveals how compatibility behaves under pressure. Design small, safe experiments to validate your criteria. For example, in a zodiac-related pairing, test communication during a simulated decision-making scenario; for devices, run a workflow that combines multiple apps simultaneously; for software ecosystems, perform an end-to-end task across integrations. Document results with timestamps, observations, and any deviations from expectations. Treat each test as data that informs adjustment of criteria, scoring, and future decisions. This empirical loop keeps your judgments grounded and actionable.

- Use a controlled, repeatable test protocol to compare options.

- Record outcomes and adjust criteria as you learn more.

- Schedule periodic re-tests to account for changes in needs or environments.
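One way to keep such test records consistent is a small log structure. The following is a sketch under stated assumptions: the `TrialRecord` class, its fields, and the sample scenarios are invented for illustration. Each record carries a UTC timestamp, the expected and observed outcomes, and free-form notes, and a helper filters out the trials that deviated from expectations.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class TrialRecord:
    """One experiment entry (hypothetical structure for illustration)."""
    scenario: str
    expected: str
    observed: str
    notes: str = ""
    # Timestamp is filled in automatically, in UTC, when the record is created.
    timestamp: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

    @property
    def deviated(self) -> bool:
        # Simple text comparison; a real protocol might compare numbers instead.
        return self.expected.strip().lower() != self.observed.strip().lower()


def deviations(log: list[TrialRecord]) -> list[TrialRecord]:
    """Return only the trials whose outcome differed from the expectation."""
    return [record for record in log if record.deviated]


# Sample entries are invented for the example.
log = [
    TrialRecord("sync 100 files between apps", "all files synced", "all files synced"),
    TrialRecord(
        "joint decision under a deadline",
        "agreement in one session",
        "needed a second session",
        notes="disagreement about priorities",
    ),
]

for record in deviations(log):
    print(f"{record.timestamp:%Y-%m-%d %H:%M} {record.scenario}: {record.observed}")
```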

Tools and Frameworks You Can Use

A practical compatibility program relies on simple yet effective tools. Build a one-page assessment worksheet to capture criteria, indicators, and scores. Use a shared template so teams can contribute consistently. For analysis, a basic scoring rubric with per-domain weights helps you see where trade-offs matter most. If you want more structure, adopt a lightweight decision framework (e.g., weighted scoring, decision matrix, or risk-benefit analysis). The objective is to make the process transparent and repeatable, not bureaucratic. My Compatibility provides templates and examples that you can adapt to your situation.

- Compatibility worksheet with a 0-5 rating scale.

- A live document or spreadsheet to track criteria and results.

- A pilot testing plan with defined success metrics.
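Weighted scoring, one of the frameworks mentioned above, can be illustrated briefly. In this sketch the weights, option names, and scores are made-up values, and `weighted_total` and `rank_options` are hypothetical helpers. Each option's 0-5 scores are combined into a weighted average on the same scale, and the options are then ranked from best to worst.

```python
def weighted_total(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average on the same 0-5 scale as the input scores."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same criteria")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(scores[c] * weights[c] for c in weights) / total_weight


def rank_options(
    options: dict[str, dict[str, float]], weights: dict[str, float]
) -> list[tuple[str, float]]:
    """Return (option, weighted score) pairs, highest score first."""
    totals = {name: weighted_total(s, weights) for name, s in options.items()}
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


# Illustrative weights: core performance counts most, adaptability least.
weights = {"core performance": 5, "reliability": 3, "adaptability": 2}

# Illustrative 0-5 scores for two hypothetical options.
options = {
    "Option A": {"core performance": 4, "reliability": 3, "adaptability": 5},
    "Option B": {"core performance": 5, "reliability": 2, "adaptability": 3},
}

for name, total in rank_options(options, weights):
    print(f"{name}: {total:.2f}")
```

Dividing by the total weight keeps the result on the 0-5 scale, so the weights do not need to sum to 1.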

Common Pitfalls and How to Avoid Them

Avoid common mistakes that derail compatibility efforts. Do not rely on a single signal or first impression to judge fit, as early signals can be misleading. Beware overfitting your criteria to a single scenario; long-term needs may evolve. Don’t neglect soft factors like user experience, trust, and alignment of values. Finally, resist the urge to complete the assessment too quickly; give yourself time to observe patterns and iterate on your criteria. The My Compatibility framework emphasizes humility, data, and adaptability as the keys to durable alignment.

- Don’t hinge selection on a single success moment.

- Don’t ignore potential future changes to needs.

- Don’t skip documentation or revision after initial results.

Integrating Learnings into Daily Practice

To turn theory into daily practice, make compatibility a standing item in decision reviews. Build a living reference that evolves with new data, experiences, and feedback. Use weekly or monthly check-ins to re-evaluate criteria, update scores, and adjust plans. Keep stakeholders informed with transparent dashboards and concise summaries. The target is to create a culture where compatibility thinking becomes second nature, rather than a one-off exercise. With consistent practice, you’ll improve outcomes across zodiac insights, gadget choices, and relationship decisions.

- Schedule regular calibration sessions and update criteria as needed.

- Share results with stakeholders to foster alignment.

- Treat compatibility as a journey, not a one-time snapshot.
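A regular check-in can be as simple as comparing the latest scores with the previous ones. The sketch below assumes scores are stored per criterion on the 0-5 scale; the `score_changes` helper, the threshold, and the sample values are hypothetical. It flags criteria whose score moved by at least a chosen number of points, so a calibration session can focus on what changed.

```python
def score_changes(
    previous: dict[str, int], current: dict[str, int], threshold: int = 1
) -> dict[str, int]:
    """Return criteria whose score moved by at least `threshold` points.

    Only criteria present in both check-ins are compared.
    """
    changes = {}
    for criterion in previous.keys() & current.keys():
        delta = current[criterion] - previous[criterion]
        if abs(delta) >= threshold:
            changes[criterion] = delta
    return changes


# Sample check-in data, invented for the example.
last_month = {"core performance": 4, "reliability": 3, "adaptability": 5}
this_month = {"core performance": 4, "reliability": 1, "adaptability": 4}

for criterion, delta in score_changes(last_month, this_month, threshold=2).items():
    print(f"{criterion}: changed by {delta:+d} points")
```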

Tools & Materials

- Compatibility assessment worksheet (printable or digital): include a scoring grid and criteria checklist

- Notebook or digital notes app: capture observations and changes over time

- Access to reference materials (books, guides, or vendor docs): to verify criteria and specs

- Timer or stopwatch: track time for experiments

- Pen, highlighter, and color-coded sticky notes: for marking priorities

Steps

Estimated time: 60-120 minutes

1. Define core criteria

List the top 3-5 criteria that truly matter in the domain. Provide measurable signals for each criterion so you can compare options fairly.

Tip: Be specific about what success looks like for each criterion.

2. Gather data across domains

Collect objective data and subjective observations for every criterion. Use multiple sources and avoid relying on a single data point.

Tip: Record sources and timestamps for traceability.

3. Score and compare options

Apply a uniform 0-5 scale to each criterion, weight them if needed, and compute total scores to rank options.

Tip: Normalize scores so cross-domain comparisons stay valid.

4. Run small tests or pilots

Implement brief experiments or pilots to validate assumptions. Document outcomes and any deviations from expectations.

Tip: Limit risk and budget; keep tests reproducible.

5. Reassess criteria and adjust

Review results after a defined period; revise criteria and weights if needed to reflect new information.

Tip: Avoid overfitting to a single scenario.

6. Document learnings and create a living reference

Store results in a central repository and update it as needs evolve. Use versioning to track changes.

Tip: Treat the reference as a dynamic tool, not a static report.
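The normalization tip in step 3 can be made concrete with a small rescaling function. This is a sketch under assumptions: the `rescale_to_rubric` helper, the raw ranges, and the sample measurements are hypothetical. It maps a raw measurement from its own range onto the 0-5 rubric scale, with an option to invert measurements where lower is better (such as error counts).

```python
def rescale_to_rubric(
    value: float, low: float, high: float, *, higher_is_better: bool = True
) -> float:
    """Map a raw measurement from [low, high] onto the 0-5 rubric scale."""
    if high <= low:
        raise ValueError("high must be greater than low")
    # Clamp out-of-range readings so the result always stays within 0-5.
    clamped = min(max(value, low), high)
    fraction = (clamped - low) / (high - low)
    if not higher_is_better:
        fraction = 1.0 - fraction
    return round(5 * fraction, 2)


# Illustrative measurements on different raw scales.
uptime_score = rescale_to_rubric(80, 0, 100)                        # percent
error_score = rescale_to_rubric(2, 0, 10, higher_is_better=False)   # errors per week
survey_score = rescale_to_rubric(7, 1, 10)                          # 1-10 survey rating

print(uptime_score, error_score, survey_score)
```

Once every measurement is on the same 0-5 scale, scores from different domains can be weighted and totaled together.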

Questions & Answers

What does matching compatibility really mean in practice?

It means assessing how well two elements fit across defined criteria and time horizons, using data and tests to forecast future performance.

Compatibility means checking how well two things fit across clear criteria and over time.

Can zodiac signs alone determine compatibility?

No. Zodiac insight should be combined with measurable signals and real-world observations to avoid over-reliance on astrology.

Zodiac clues help, but real data matters more.

How long does a basic compatibility assessment take?

A solid initial pass typically takes 1-2 hours, with follow-up reviews as needs change.

Plan for about an hour or two for the first pass.

What tools make compatibility work easier?

A simple scoring worksheet, data collection templates, and a pilot-testing plan greatly improve reliability.

Use a scoring sheet and a few quick tests.

What are common mistakes to avoid?

Relying on a single signal, ignoring long-term changes, and failing to document results can derail alignment.

Don’t rely on one signal and always test over time.

Highlights

- Define clear criteria before evaluating options

- Combine objective data with subjective insights

- Test assumptions with small, safe experiments

- Keep criteria dynamic and revisable

- Document learnings for future decisions