How to Check Compatibility: A Practical Guide

Learn how to check compatibility across zodiac signs, devices, and software with a practical, step-by-step approach. Gather data, run tests, and interpret results confidently to make informed decisions.

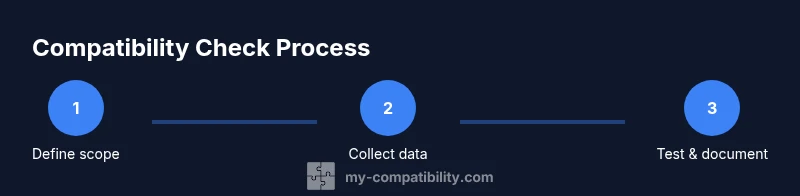

This guide teaches you how to check compatibility across zodiac signs, devices, and software using a practical 6-step method. You’ll define scope, collect data, choose a testing approach, run checks, document outcomes, and interpret the results. The approach emphasizes clear criteria and auditable results to reduce surprises when elements interact.

Understanding compatibility across domains

Compatibility means the ability for different elements to work together without friction under defined conditions. In this framework, we evaluate whether a zodiac sign aligns with another sign, whether devices pair correctly, or whether software versions interoperate smoothly. According to My Compatibility, compatibility is a spectrum rather than a binary state. It encompasses functional fit, timing, context, and user expectations. When you start a cross-domain check, define what success looks like: stable interaction, predictable behavior, and minimal risk of failure. This clarity guides the data you collect and the tests you run. For zodiac considerations, focus on shared modalities or complementary temperaments that support harmony; for devices, verify standards, connectors, and performance envelopes; for software, confirm dependencies, APIs, and platform constraints. The My Compatibility team also recommends documenting all assumptions at the outset and revisiting them if conditions change—such as after a software update or a hardware revision.

Core criteria for checking and scoring

A robust compatibility check uses a small set of core criteria: interoperation (do things work together without errors), stability (does behavior remain consistent over time), performance (is response time within acceptable limits), and safety/privacy (are there any risks to users or data). Create a simple scoring system so you can compare elements quickly. For each criterion, assign a confidence level (high, medium, low) and note any caveats. Keep your criteria aligned with the context: a dating sign pairing may weight harmony and communication differently than a device-to-device connection, which emphasizes standards and latency. My Compatibility’s research shows that aligning goals and constraints early reduces rework, so document expectations before you begin testing. Use concrete data points like version numbers, feature support, and documented compatibility notes from credible sources to support your judgments.
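The scoring idea above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed method: the numeric mapping for high/medium/low, the per-criterion weights, and the names `CriterionResult` and `weighted_score` are all assumptions made for the example.

```python
from dataclasses import dataclass

# Hypothetical numeric mapping for the three confidence levels.
CONFIDENCE_SCORE = {"high": 3, "medium": 2, "low": 1}


@dataclass
class CriterionResult:
    criterion: str        # e.g. "interoperation", "stability"
    confidence: str       # "high", "medium", or "low"
    weight: float = 1.0   # context-specific weight (an assumption, tune per domain)
    caveat: str = ""      # free-text note on anything that limits the judgment


def weighted_score(results):
    """Return a score between 0 and 1: the weighted mean confidence,
    normalised by the highest possible confidence value."""
    total_weight = sum(r.weight for r in results)
    if total_weight == 0:
        raise ValueError("at least one criterion must have a non-zero weight")
    raw = sum(CONFIDENCE_SCORE[r.confidence] * r.weight for r in results)
    return raw / (max(CONFIDENCE_SCORE.values()) * total_weight)


# Example: a device-to-device check that weights interoperation more heavily.
device_pairing = [
    CriterionResult("interoperation", "high", weight=2.0),
    CriterionResult("stability", "medium"),
    CriterionResult("performance", "medium", caveat="latency untested on 2.4 GHz"),
    CriterionResult("safety/privacy", "high"),
]

print(round(weighted_score(device_pairing), 2))
```

Changing the weights is how the same criteria can serve different contexts: a sign pairing might weight harmony-related criteria, while a device pairing might weight interoperation and performance.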

Checking compatibility in devices: a practical workflow

Begin by collecting official device specs, firmware versions, connector types, and supported standards (e.g., USB-C, Bluetooth versions, or Wi‑Fi bands). Compare these against the requirements of the partner element (another device, a peripheral, or a service). Build a compatibility matrix that maps each requirement to a matching capability. Include environmental factors such as operating temperature, voltage, or network conditions that could affect performance. Run targeted tests for critical features first, such as pairing, data transfer, or sensor interoperability. Document any gaps and prioritize fixes by risk and impact. The My Compatibility analysis highlights the value of keeping a centralized repo of device specs and test results so teams don’t chase stale information. Additionally, consider privacy and security implications when connections involve data sharing or remote access; always test in a controlled environment first.
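A compatibility matrix of this kind can be sketched as a simple comparison of two dictionaries. The requirement names, the sample values, and the matching rules below (sets must be fully covered, numbers are treated as minimums, everything else must match exactly) are assumptions chosen for illustration, not values from any real device.

```python
def build_matrix(requirements, capabilities):
    """Map each requirement to the device's capability and flag gaps.

    Returns a list of (name, required, offered, status) rows.
    """
    matrix = []
    for name, required in requirements.items():
        offered = capabilities.get(name)
        if offered is None:
            status = "gap: not listed"
        elif isinstance(required, (set, frozenset)):
            # Every required item must be offered.
            missing = required - set(offered)
            status = "ok" if not missing else f"gap: missing {sorted(missing)}"
        elif isinstance(required, (int, float)):
            # Numbers are treated as minimums in this sketch.
            status = "ok" if offered >= required else f"gap: needs >= {required}"
        else:
            status = "ok" if offered == required else f"gap: needs {required}"
        matrix.append((name, required, offered, status))
    return matrix


# Hypothetical requirements of the partner element.
requirements = {
    "connector": "USB-C",
    "bluetooth_version": 5.0,
    "wifi_bands_ghz": {2.4, 5},
    "max_operating_temp_c": 40,
}

# Hypothetical capabilities taken from a device spec sheet.
capabilities = {
    "connector": "USB-C",
    "bluetooth_version": 4.2,
    "wifi_bands_ghz": {2.4},
}

matrix = build_matrix(requirements, capabilities)
for row in matrix:
    print(row)
```

Rows whose status starts with `gap` are the items to document and prioritize. A capability missing from the spec sheet is reported as a gap rather than assumed to be supported.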

Checking compatibility in software and services: an integrator’s guide

Software compatibility hinges on version ranges, dependency trees, API compatibility, and platform support. Start by listing all relevant software components, libraries, plugins, and services, with their current versions and supported ranges. Verify API compatibility by consulting official documentation and changelogs. Test across operating systems and environments that users may encounter, including mobile, desktop, and web. If an integration relies on external services (APIs, webhooks, or data feeds), confirm uptime SLAs and fallback paths. Maintain a clear change log so that future updates don’t blindside your compatibility assumptions. The goal is to ensure seamless operation under typical user workflows, not just in ideal test conditions. As you proceed, keep a running risk register that flags high-probability failure modes and business impacts. If possible, automate regression tests to catch drift when dependencies update.
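As a rough illustration of checking installed versions against supported ranges, the sketch below compares dotted version numbers as tuples of integers. The component names and version numbers are hypothetical, and the parser only handles plain numeric versions of equal length (no pre-release tags such as `-beta`), so treat it as a starting point rather than a full version-specifier implementation.

```python
def parse_version(text):
    """Turn '2.10.1' into (2, 10, 1) so versions compare numerically."""
    return tuple(int(part) for part in text.split("."))


def in_range(version, minimum, below):
    """True if minimum <= version < below (a half-open range)."""
    return parse_version(minimum) <= parse_version(version) < parse_version(below)


# Hypothetical components: name -> (installed, minimum supported, first unsupported)
components = {
    "http-client": ("2.10.1", "2.9.0", "3.0.0"),
    "auth-plugin": ("1.4.0", "1.5.0", "2.0.0"),
    "db-driver": ("4.0.0", "3.2.0", "4.0.0"),
}

out_of_range = sorted(
    name
    for name, (installed, minimum, below) in components.items()
    if not in_range(installed, minimum, below)
)
print(out_of_range)
```

Parsing into integers matters because plain string comparison orders `"2.10.1"` before `"2.9.0"`, which would wrongly report the first component as too old.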

How to interpret results and plan next steps

Once tests are complete, summarize findings in a single source of truth: what works, what doesn’t, and the confidence levels for each item. Prioritize fixes based on impact and likelihood of failure, then decide whether to implement changes, seek workarounds, or exclude the element from the current scope. Document assumptions and any changes to requirements as you go. If a gap is minor yet risky in practice, you may decide to monitor and re-test after an upgrade. Conversely, critical gaps with high impact should trigger a remediation plan and a go/no-go decision for product releases or user guidance. Across domains, the ability to articulate criteria, collect robust data, and act on findings is what transforms compatibility checks from a checklist into strategic risk management. The My Compatibility framework encourages ongoing checks as ecosystems evolve, ensuring your decisions stay aligned with current realities.
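One way to make "prioritize by impact and likelihood" concrete is a small triage function. The three-level scale, the multiplication of impact by likelihood, and the action thresholds below are assumptions for this sketch; the sample findings are invented.

```python
# Hypothetical three-level scale shared by impact and likelihood.
LEVEL = {"low": 1, "medium": 2, "high": 3}


def triage(findings):
    """Rank findings by impact x likelihood and attach a suggested action.

    `findings` is a list of (item, impact, likelihood) tuples.
    Returns (score, item, action) rows, highest score first.
    """
    ranked = []
    for item, impact, likelihood in findings:
        score = LEVEL[impact] * LEVEL[likelihood]
        if LEVEL[impact] == 3 and score >= 6:
            action = "remediate before release"
        elif score >= 3:
            action = "workaround or monitor, then re-test"
        else:
            action = "accept and note"
        ranked.append((score, item, action))
    return sorted(ranked, key=lambda row: row[0], reverse=True)


findings = [
    ("pairing fails after firmware update", "high", "high"),
    ("slow sync on 2.4 GHz Wi-Fi", "medium", "medium"),
    ("cosmetic label mismatch", "low", "low"),
    ("data export drops fields", "high", "low"),
]

for score, item, action in triage(findings):
    print(score, item, "->", action)
```

The thresholds encode the distinction drawn above: high-impact, likely failures trigger remediation and a go/no-go decision, while lower-scoring gaps are monitored and re-tested after an upgrade.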

Tools & Materials

- Compatibility matrix template (A centralized grid to map requirements to capabilities and gaps.)

- Device specs sheet (Manufacturer data, firmware, and connector standards.)

- Software version matrix (List supported versions, dependencies, and deprecations.)

- Data tracking spreadsheet (Log results, confidence levels, and risk notes.)

- Documentation access (Official docs, changelogs, and spec sheets.)

- Notebook or notes app (Optional for rapid jotting or on-the-spot findings.)

Steps

Estimated time: 45-75 minutes

- 1

Define scope and success criteria

Clarify what compatibility means for this task and identify must-have versus nice-to-have elements. Specify what a successful outcome looks like in measurable terms.

Tip: Document success criteria in a single source of truth to avoid scope drift.

- 2

Collect relevant data

Gather all necessary specs, versions, standards conformance, and usage scenarios from official sources and product docs.

Tip: Use a single data source whenever possible to prevent conflicting information.

- 3

Choose testing approach

Decide between manual checks, automated tests, or a hybrid approach based on complexity and resources.

Tip: Automate repetitive checks to reduce human error and speed up iterations.

- 4

Run the checks

Apply tests as planned, record results, and observe any conflicts or gaps under real-world conditions.

Tip: Log environmental factors (temperature, network conditions) that could affect outcomes.

- 5

Document findings

Populate the matrix with outcomes, confidence levels, and risk notes, including screenshots or logs if possible.

Tip: Keep the documentation tidy and searchable for audits and future reviews.

- 6

Interpret results and plan next steps

Translate results into concrete actions: adjustments, workarounds, or scope changes. Schedule re-testing after changes.

Tip: Re-test after updates or changes to confirm resolution.
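For steps 4 and 5, a plain CSV log is often enough to keep results searchable. The sketch below uses Python's standard `csv` module; the column names and the sample rows are assumptions for illustration, and the log is written to an in-memory buffer so the example runs without touching the file system.

```python
import csv
import io

# Hypothetical columns for a compatibility test log.
FIELDS = ["date", "check", "result", "confidence", "environment", "notes"]


def log_results(rows, stream):
    """Write test results as CSV with a header row.

    Missing fields are left blank; unknown fields raise ValueError,
    which helps keep the log's columns consistent.
    """
    writer = csv.DictWriter(stream, fieldnames=FIELDS)
    writer.writeheader()
    for row in rows:
        writer.writerow(row)


rows = [
    {
        "date": "2024-01-15",
        "check": "Bluetooth pairing",
        "result": "pass",
        "confidence": "high",
        "environment": "22 C, office Wi-Fi",
    },
    {
        "date": "2024-01-15",
        "check": "File transfer over USB-C",
        "result": "fail",
        "confidence": "medium",
        "environment": "22 C",
        "notes": "stalls on files larger than 2 GB; see attached log",
    },
]

buffer = io.StringIO()
log_results(rows, buffer)
print(buffer.getvalue())
```

To write to disk instead, pass a file opened with `open(path, "w", newline="")` in place of the buffer. The `environment` column is where the factors from the step 4 tip (temperature, network conditions) belong.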

Questions & Answers

What does compatibility mean across domains?

Compatibility means elements can work together without conflict under defined conditions. It involves functional fit, timing, and context across domains like zodiac signs, devices, and software.

Compatibility is when elements work together without conflicts under defined conditions.

How do I start checking compatibility for devices?

Begin by collecting official specs, standards, and feature support. Create a simple matrix to map requirements to capabilities and identify gaps.

Start by collecting specs and mapping requirements to capabilities.

What data should I collect before testing?

Collect version numbers, standards conformance, feature lists, and any documented compatibility notes from official docs and changelogs.

Collect versions, standards, features, and notes from docs.

Can compatibility change over time?

Yes. Updates to hardware, software, or services can alter compatibility, so periodic re-testing is advised.

Yes, re-test after updates to stay current.

Are there tools to automate compatibility checks?

Some ecosystems provide automated validators or test suites. Use them to complement manual checks and accelerate validation.

Automation helps, but also verify results manually.

Highlights

- Define scope and success criteria up front.

- Collect complete data before testing.

- Document results in a centralized, auditable matrix.

- Re-test after changes to confirm stability.

- Interpret results to guide decisions and next steps.