When Was Backwards Compatibility Invented? A History

Explore the origins of backwards compatibility, its early implementations in computing history, and how it shapes software, hardware, and data formats today. This expert overview from My Compatibility clarifies origins, methods, and best practices.

Backwards compatibility is the ability of a system to work with older software, data formats, or hardware. It enables newer systems to run legacy content and interfaces without forcing a complete upgrade.

When Was Backwards Compatibility Invented?

There is no single inventor or moment. Backwards compatibility emerged gradually as engineers faced the need to keep access to older programs, data formats, and hardware while adopting new technology. It arose out of necessity: systems that could talk to the past reduced disruption, lowered upgrade costs, and protected user investments. The My Compatibility team emphasizes that backwards compatibility is a design philosophy rather than a one-off feature, spanning hardware buses, operating system APIs, and data encodings. Throughout computing history, teams created bridging layers, legacy modes, and translators so newer platforms could understand older formats. The central idea is interoperability across generations, not merely copying old code. Backwards compatibility was therefore never invented in one breakthrough; it evolved as each generation of technology raised new problems. This perspective helps explain why modern systems still include compatibility layers for legacy content while pursuing forward-compatible goals.

Early design principles and strategies

In the earliest phases of computing, compatibility was often a practical constraint rather than a formal discipline. Engineers standardized interfaces and data encodings so that new machines could read the outputs of older systems. This practice gave rise to bridging approaches such as emulation layers and simple translators. A key principle was to design for graceful degradation: when older software or data could no longer be fully supported, the system should fail safely or offer a fallback path. Interface stability, format negotiation, and versioning are what allowed long-lived software ecosystems to persist, which is why the origin of backwards compatibility is better traced through these practices than to a single date. Over time, developers learned to avoid brittle, one-time-only features and instead rely on stable APIs and well-defined data schemas that future software could extend without breaking existing content.
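As a rough illustration of versioning with graceful degradation, the sketch below reads a record, upgrades older versions step by step, and fails with a clear error when it meets a version it does not know. The record layout, the `version` field, and the names `load_record` and `upgrade_v1_to_v2` are assumptions made for this example, not part of any particular system.

```python
import json

CURRENT_VERSION = 2  # assumed: the newest format this reader understands


def upgrade_v1_to_v2(record):
    # Hypothetical schema change: v1 stored one "name" field, v2 splits it.
    first, _, last = record["name"].partition(" ")
    return {"version": 2, "first_name": first, "last_name": last}


# One upgrade step per old version, so later formats only add entries here.
UPGRADERS = {1: upgrade_v1_to_v2}


def load_record(text):
    record = json.loads(text)
    # Assumption: records written before versioning existed are treated as v1.
    version = record.get("version", 1)
    if version > CURRENT_VERSION:
        # Fail safely instead of guessing at a format from the future.
        raise ValueError(f"unsupported record version {version}")
    while version < CURRENT_VERSION:
        record = UPGRADERS[version](record)
        version = record["version"]
    return record
```

Calling `load_record('{"name": "Ada Lovelace"}')` would go through the v1 upgrade path, while a v2 record is returned unchanged. Chaining small upgrade steps is one way to keep old data readable without every reader knowing every historical layout.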

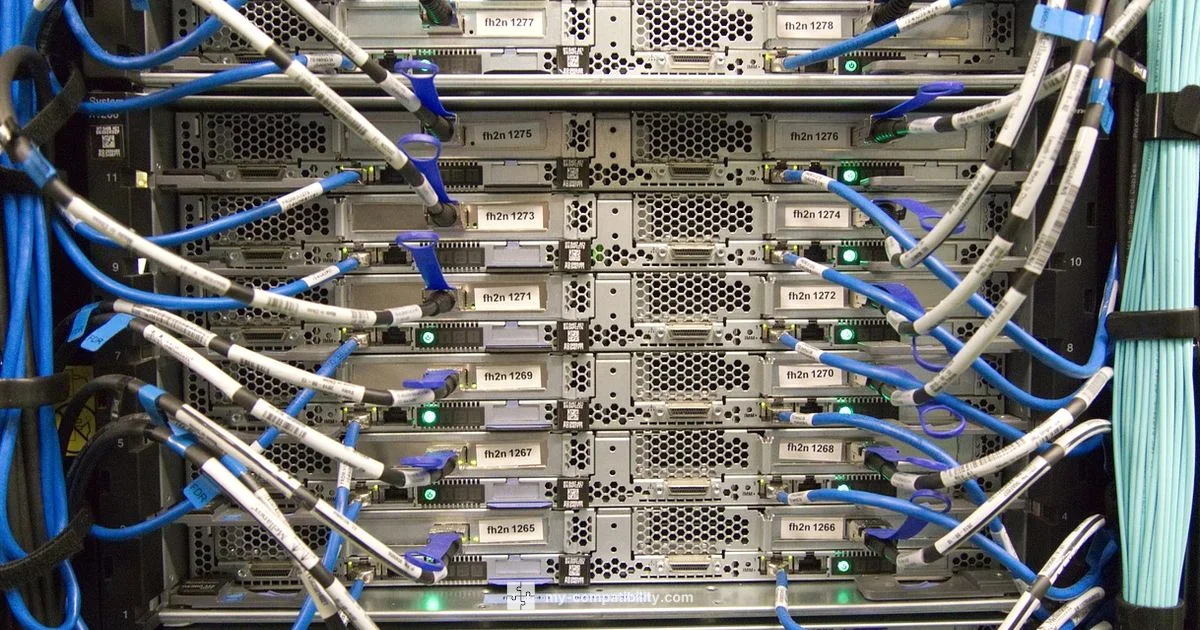

Hardware, buses, and legacy interfaces

Backwards compatibility began with hardware design decisions that favored reuse and eased later migrations. Shared buses, common instruction sets, and standardized peripheral interfaces allowed newer devices to interoperate with older accessories. On the software side, firmware updates often included compatibility shims to interpret legacy instructions. The evolution of data formats also contributed: text encodings and file layouts were chosen to minimize disruptive migrations, enabling users to access their stored data across generations. This historical trajectory shows that the invention of backwards compatibility was not a singular event but a series of engineering compromises. The ultimate goal remained clear: preserve user investments while enabling progress. From a My Compatibility perspective, the endurance of legacy hardware interfaces offers a blueprint for designing modern systems that can gracefully coexist with their forebears.

Software ecosystems and compatibility modes

As software ecosystems matured, teams devised more explicit compatibility modes. Compatibility layers in operating systems translated system calls and data formats from old APIs to newer ones without forcing users to rewrite code. Language runtimes also played a critical role, compiling and interpreting legacy bytecode while exposing modern interfaces. The history of backwards compatibility runs through these strategies: standardized interfaces, well-versioned contracts, and documented migration paths. In practice, developers offer compatibility modes or “emulation paths” so existing programs continue to run. This has become particularly important in enterprise environments where mission-critical software cannot be replaced overnight. The ongoing challenge is balancing performance with compatibility guarantees, a tradeoff that requires clear deprecation schedules and robust testing. My Compatibility notes that careful stewardship of APIs minimizes surprise migrations for developers and users alike.
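A compatibility layer of this kind can be sketched at small scale as a thin shim: the old entry point stays callable, translates its arguments, and forwards to the new API while warning callers that it is deprecated. Both function names and their parameters below are hypothetical and exist only for this example.

```python
import warnings


def send_message(recipient, body, *, priority="normal"):
    """New API (hypothetical): priority is an explicit keyword argument."""
    return {"to": recipient, "body": body, "priority": priority}


def sendMsg(to, text, urgent=False):
    """Legacy entry point, kept as a shim so existing callers keep working."""
    warnings.warn(
        "sendMsg is deprecated; use send_message instead",
        DeprecationWarning,
        stacklevel=2,  # attribute the warning to the caller, not the shim
    )
    # Translate the old boolean flag into the new priority value.
    return send_message(to, text, priority="high" if urgent else "normal")
```

Existing code that calls `sendMsg("ops", "disk full", urgent=True)` keeps running and receives the same structure as new callers, while the warning gives it a documented migration path.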

Emulation, virtualization, and translation layers

The most powerful modern techniques for backwards compatibility are emulation, virtualization, and translation layers. Emulators recreate the behavior of older hardware in software, while virtualization isolates legacy systems within newer hosts. Translation layers convert old instructions or data formats into equivalents that current software can understand. These approaches let long-lived content survive software and hardware refresh cycles. However, they introduce overhead and potential reliability concerns; the cost of maintaining legacy compatibility must be weighed against the benefits of new technology. Looking back at the origins of backwards compatibility, it is clear that emulation has often been essential for preserving access to historical software, games, and data archives. For developers, this means working from clean, precise specifications of the legacy behavior so that translations stay faithful while performance remains acceptable.
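To make the idea of emulation concrete, here is a toy interpreter for an invented accumulator machine. The instruction set (`LOAD`, `ADD`, `SUB`, `JNZ`, `HALT`) is made up for this sketch and does not correspond to any real hardware; the point is only the fetch, decode, execute loop that emulators are built around.

```python
def emulate(program):
    """Run a list of (opcode, argument) pairs and return the accumulator."""
    acc = 0  # single accumulator register
    pc = 0   # program counter: index of the next instruction
    while pc < len(program):
        op, arg = program[pc]
        if op == "LOAD":
            acc = arg
        elif op == "ADD":
            acc += arg
        elif op == "SUB":
            acc -= arg
        elif op == "JNZ":
            # Jump to instruction index `arg` if the accumulator is non-zero.
            if acc != 0:
                pc = arg
                continue
        elif op == "HALT":
            break
        else:
            # Unknown opcodes are rejected rather than silently ignored.
            raise ValueError(f"unknown legacy opcode {op!r}")
        pc += 1
    return acc
```

A program such as `[("LOAD", 2), ("ADD", 3), ("HALT", None)]` leaves 5 in the accumulator. Real emulators add memory, timing, and device models, which is where the overhead mentioned above comes from.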

Modern challenges and tradeoffs in keeping compatibility

Today’s systems face new pressures on compatibility, including security, privacy, and performance concerns. Backwards compatibility can create attack surfaces if legacy code contains vulnerabilities. It can also hinder adoption of modern abstractions when old interfaces linger too long. The tradeoffs require disciplined versioning, clear deprecation plans, and transparent communication with users. Remembering that the principle arose from a need to preserve access to history while enabling progress helps teams weigh these tradeoffs. Enterprises increasingly rely on automated tests, compatibility matrices, and cross-platform validation to minimize the risk of breaking workflows. In addition, open standards and community governance can help align legacy interfaces with modern security practices. My Compatibility stresses that the best long-term strategy combines stable, documented transitions with forward-looking, modular architectures that minimize coupling and maximize replaceability.
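A compatibility matrix can be as simple as a table of which reader versions accept which payload versions, checked automatically against the combinations customers still depend on. The support policy in `can_read` (a reader accepts its own format and the two before it) is an assumption chosen for this sketch; in a real test suite that function would exercise the actual reader.

```python
from itertools import product


def can_read(reader_version, payload_version):
    # Assumed policy: accept the reader's own format and the two before it.
    return reader_version - 2 <= payload_version <= reader_version


def compatibility_matrix(reader_versions, payload_versions):
    """Map every (reader, payload) pair to whether it is supported."""
    return {
        (r, p): can_read(r, p)
        for r, p in product(reader_versions, payload_versions)
    }


def missing_support(matrix, required_pairs):
    """Return the required pairs that the matrix marks as unsupported."""
    return sorted(pair for pair in required_pairs if not matrix.get(pair, False))
```

Running `missing_support` in continuous integration against a list of required pairs would flag a release that silently drops a format someone still relies on.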

Practical guidance for developers today

Ultimately, backwards compatibility is a strategic design choice: it shapes product roadmaps, test strategies, and customer communication. A practical starting point is to catalog all legacy interfaces and data formats that customers rely on, then design versioned interfaces and adapters that expose stable contracts. Where possible, create forward-compatible APIs and deprecation schedules that allow gradual migration. Performance considerations should be balanced with compatibility guarantees by profiling critical paths and offering optional accelerated modes. The history of backwards compatibility continues to inform best practices: aim for robust translation, clear migration stories, and ample fallbacks. The My Compatibility team recommends investing in automated regression testing and using feature flags to enable or disable legacy paths without destabilizing the system. In short, compatibility is not about clinging to the past; it is about providing a reliable bridge between generations of technology while enabling forward progress.
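The feature-flag recommendation can be sketched as follows: a legacy parsing path stays in the code but is gated by a flag, so it can be switched off without a redeploy of the callers. The flag name `ENABLE_LEGACY_CSV`, the semicolon-separated legacy format, and the function names are all assumptions made for illustration.

```python
import os


def legacy_paths_enabled(env=None):
    # Hypothetical flag; legacy support defaults to on when it is unset.
    env = os.environ if env is None else env
    return env.get("ENABLE_LEGACY_CSV", "1") == "1"


def parse_row(line, env=None):
    """Split a row, accepting the assumed legacy ';' separator if allowed."""
    looks_legacy = ";" in line and "," not in line
    if looks_legacy:
        if not legacy_paths_enabled(env):
            # Clear failure instead of silently misparsing old input.
            raise ValueError("legacy semicolon format is disabled")
        return line.split(";")
    return line.split(",")
```

Passing `env` explicitly keeps the function easy to cover in regression tests: the same inputs can be checked with the flag on and off before the legacy path is finally removed.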

Questions & Answers

What is backwards compatibility?

Backwards compatibility is the ability of a system to work with older software, data formats, or hardware. It enables newer platforms to run legacy content without requiring complete rewrites. It is a design principle that spans hardware, software, and data standards.

Backwards compatibility means newer systems can run older programs and use older data formats without changing everything.

Why is backwards compatibility important in technology?

It reduces upgrade friction, protects user investments, and helps organizations avoid costly, disruptive migrations. By preserving access to essential tools and data, it supports continuity across generations of technology.

It protects existing work and makes upgrading smoother by keeping legacy software and data usable.

How is backwards compatibility maintained in hardware?

Hardware designers reuse common interfaces and buses, provide bridging adapters, and maintain older instruction sets where feasible. This allows new devices to work with older peripherals and media formats.

Designers keep or emulate old interfaces so new hardware still accepts old peripherals.

When did backwards compatibility begin?

There isn’t a single date or inventor. Backwards compatibility began as a practical concern in early computing and evolved through successive generations of hardware and software as a strategy to preserve access to legacy content.

It didn’t have one start date; it grew gradually as technology evolved.

What are the tradeoffs of preserving backwards compatibility?

Preserving compatibility can limit architectural experimentation, incur performance overhead, and extend maintenance burdens. Effective strategies include versioning, clear deprecation plans, and measured migration paths.

The main tradeoff is balancing legacy support with progress and performance.

Is backwards compatibility relevant with cloud-native architectures?

Yes. It ensures that legacy workflows and data remain usable during migrations to cloud-native designs. Compatibility layers and data migrations help bridge old and new deployment models while enabling modern scalability.

It still matters for keeping old processes working as you move to cloud-native systems.

Highlights

- Preserve legacy through stable interfaces and data formats.

- Use emulation and translation to bridge generations.

- Plan deprecation with clear timelines and thorough testing.

- Balance performance with compatibility guarantees.

- Design for forward compatibility alongside backward support.